Add HTTP Caching to the Episerver Content Delivery API

Leverage the power of Content Delivery Networks to cache data and scale your Episerver site globally

HTTP Caching is a powerful tool for expanding the global reach of your site without having to deploy servers into multiple regions. It lets you use Content Delivery Networks (CDNs) and device caches to limit the requests that need to go back to the server, which improves your site's performance.

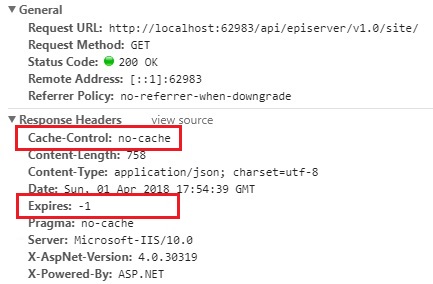

If you’ve taken the Episerver Content Delivery API for a spin, you might have noticed that all requests return a Cache-Control: no-cache header.

For example, a request to the Site Definition API looks like this in the browser:

You’ll also notice the Expires header with a negative value, indicating again that the response isn’t cacheable by the browser, or by Content Delivery Networks (CDNs) like Cloudflare or Akamai.
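If you would rather check this from code than from the browser's network tab, here is a minimal sketch. It assumes only the standard System.Net.Http types. The `CacheHeaderInspector` class and `IsSharedCacheable` method are hypothetical names, and the rule they apply is a deliberately conservative simplification of HTTP caching semantics.

```csharp
using System;
using System.Net.Http;
using System.Net.Http.Headers;

public static class CacheHeaderInspector
{
    // Hypothetical helper: returns true only when the response explicitly
    // opts in to shared (CDN) caching with a positive lifetime.
    public static bool IsSharedCacheable(HttpResponseMessage response)
    {
        CacheControlHeaderValue cacheControl = response.Headers.CacheControl;

        // No header, or any directive that forbids shared caching.
        if (cacheControl == null || cacheControl.NoCache || cacheControl.NoStore || cacheControl.Private)
        {
            return false;
        }

        // s-maxage, when present, overrides max-age for shared caches.
        TimeSpan? lifetime = cacheControl.SharedMaxAge ?? cacheControl.MaxAge;
        return cacheControl.Public && lifetime.HasValue && lifetime.Value > TimeSpan.Zero;
    }
}
```

A response carrying Cache-Control: no-cache, as the Content Delivery API sends by default, would return false here.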

But, if HTTP caching is so powerful, why isn’t it implemented by default?

The truth is, determining which content in Episerver can and can’t be cached is already a complex endeavour. As developers, we’ve grown accustomed to relying on the cache that IContentLoader provides, as well as the built-in event hooks that clear that cache and keep Episerver Find up to date as we publish new content on our sites.

However, we often run into challenges with ensuring we don’t cache personalized content or content which is only accessible to authenticated users as we build our own APIs and features on top of Episerver.

If the Content Delivery API made assumptions about how long browsers or CDNs should cache your responses, and set aggressive caching headers as a result, we would quickly run into the scenario we all dread:

I published an update to the home page, but I just looked on my phone and it’s still the old content. I thought this was supposed to just work?

– Your editors, probably

I think we’ve all gotten a message similar to this one, for one reason or another. In a way, the choice to defer the HTTP caching implementation ensures that in most scenarios, content updates are pushed out to the Content Delivery API in a timely manner.

Shall we dig deeper?

If we look under the hood of the Content Delivery API, we see that it’s largely driven by 3 Web API controllers, one for each endpoint:

EPiServer.ContentApi.Controllers.ContentApiController

EPiServer.ContentApi.Search.Controllers.ContentApiSearchController

EPiServer.ContentApi.Controllers.SiteDefinitionApiController

ContentApiController largely wraps many of the operations of IContentLoader, and therefore inherently leverages the Episerver object cache, complete with support for clearing that cache when content is published. At the same time, IContentLoader also returns personalized content, so even an anonymous user might receive a different response than the next anonymous user.

ContentApiSearchController provides a way to query content within Episerver Find, and contains a built-in personalize parameter for toggling personalized content in responses. By default, anonymous responses are returned directly from Episerver Find, forgoing personalization in favor of performance.

If the personalize flag is passed in as true, then content is loaded and returned via IContentLoader rather than projected directly from the index in order to fetch personalized responses. The Content Search API also caches Find responses for 30 minutes by default (configurable in ContentSearchApiOptions), which means even if new content is published in Episerver, it may not immediately show up in responses from the Search endpoint.

If things weren’t looking complex enough yet, the expand parameter enables developers to request that certain reference properties be expanded into full object responses to prevent the need for making many requests back to the server to load referenced data. Therefore, if a Page contains a Content Area that references 10 Blocks, there are 11 separate pieces of content that must be tracked in order to set an accurate Cache-Control header if expand is used.
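One way to reason about this: a response that embeds several content items can only be cached for as long as its shortest-lived item allows. The sketch below assumes you assign each content item a cache lifetime yourself. `ExpandedResponseCache`, `EffectiveMaxAge`, and the lifetimes used are hypothetical and not part of the Content Delivery API.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class ExpandedResponseCache
{
    // Returns the shortest lifetime among all items in the response.
    // Returns TimeSpan.Zero (do not cache) when the list is empty or
    // when any item has a zero or negative lifetime.
    public static TimeSpan EffectiveMaxAge(IEnumerable<TimeSpan> itemLifetimes)
    {
        List<TimeSpan> lifetimes = itemLifetimes.ToList();
        if (lifetimes.Count == 0)
        {
            return TimeSpan.Zero;
        }

        TimeSpan shortest = lifetimes.Min();
        return shortest < TimeSpan.Zero ? TimeSpan.Zero : shortest;
    }
}
```

For the Page with 10 Blocks, a page lifetime of one day and block lifetimes of one hour would yield an effective max-age of one hour for the expanded response.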

At this point, it’s clear that determining a universal HTTP caching strategy would be fairly complex, and so Episerver has opted to defer that to developers to decide during individual implementations.

Fear not, you still have control.

Even though the Content Delivery API doesn’t automatically add a public Cache-Control header, you still have control in your implementation thanks to Web API. Action Filters let you inspect and modify Request and Response objects, and adding one to work with the Content Delivery API takes only a few lines.

For example, here is a simple Action Filter which adds Cache-Control: public, max-age=86400 to all anonymous requests to the Content Delivery API:
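A minimal sketch of such a filter follows. It assumes ASP.NET Web API 2 and that the three controller types listed earlier are public and referenced from your project. The class name matches the one registered in the configuration snippet further down, but the body is an illustration under those assumptions rather than a definitive implementation.

```csharp
using System;
using System.Net.Http.Headers;
using System.Web.Http.Filters;
using EPiServer.ContentApi.Controllers;
using EPiServer.ContentApi.Search.Controllers;

public class ContentDeliveryApiCacheActionFilter : ActionFilterAttribute
{
    // One day, matching max-age=86400. An illustrative value, not a recommendation.
    private static readonly TimeSpan MaxAge = TimeSpan.FromSeconds(86400);

    public override void OnActionExecuted(HttpActionExecutedContext context)
    {
        base.OnActionExecuted(context);

        // Response is null when the action threw; leave error responses alone too.
        var response = context.Response;
        if (response == null || !response.IsSuccessStatusCode)
        {
            return;
        }

        // Global filters see every Web API request, so only touch the
        // three Content Delivery API controllers.
        var controller = context.ActionContext.ControllerContext.Controller;
        var isContentDeliveryApi = controller is ContentApiController
            || controller is ContentApiSearchController
            || controller is SiteDefinitionApiController;
        if (!isContentDeliveryApi)
        {
            return;
        }

        // Never mark responses for authenticated users as publicly cacheable.
        var identity = context.ActionContext.RequestContext.Principal?.Identity;
        if (identity != null && identity.IsAuthenticated)
        {
            return;
        }

        // Replaces the default "no-cache" value with "public, max-age=86400".
        response.Headers.CacheControl = new CacheControlHeaderValue
        {
            Public = true,
            MaxAge = MaxAge
        };
    }
}
```

The sketch leaves the Expires header untouched, since caches that understand max-age give it precedence over Expires.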

Normally, an Action Filter is added to each controller by attaching it as an attribute above the class declaration. Since these controllers don’t belong to you, you’ll have to take a more global approach.

In your Initialization module where you are configuring Web API, add an additional line to add the ActionFilter to the global Filters collection, which configures it to run on each Web API request.

    GlobalConfiguration.Configure(config =>
    {
        config.IncludeErrorDetailPolicy = IncludeErrorDetailPolicy.LocalOnly;
        config.Formatters.JsonFormatter.SerializerSettings = new JsonSerializerSettings();
        config.Formatters.XmlFormatter.UseXmlSerializer = true;
        config.DependencyResolver = new StructureMapResolver(context.StructureMap());
        config.MapHttpAttributeRoutes();
        config.EnableCors();

        // The above is likely there already, but add this line:
        config.Filters.Add(new ContentDeliveryApiCacheActionFilter());
    });

Since these global filters run on every Web API request, the example filter includes logic to ensure it applies only to Content Delivery API requests by checking the controller types.

Obviously, this is a simple example that provides rather aggressive caching, but it’s largely up to you as a developer to determine what you can and can’t cache. For example, we could expand our logic to only apply to certain routes or methods within the API, or even to specific pieces of content. The relevant information to make these decisions is available within the HttpActionExecutedContext instance which is passed to the filter.
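As one illustration of route-based rules, the sketch below maps a request path to a cache lifetime. The path prefixes are the Content Delivery API routes. The class name, method name, and lifetimes are hypothetical and should be tuned to your own site.

```csharp
using System;

public static class ContentDeliveryCachePolicy
{
    // Hypothetical per-route lifetimes. Returns null when the path
    // should keep the API's default (non-cacheable) headers.
    public static TimeSpan? MaxAgeFor(string requestPath)
    {
        if (string.IsNullOrEmpty(requestPath))
        {
            return null;
        }

        // Site definitions change rarely, so cache them for a day.
        if (HasPrefix(requestPath, "/api/episerver/v1.0/site/"))
        {
            return TimeSpan.FromDays(1);
        }

        // Content changes more often, so keep the lifetime short.
        if (HasPrefix(requestPath, "/api/episerver/v1.0/content/"))
        {
            return TimeSpan.FromMinutes(10);
        }

        // Search and everything else are left alone in this sketch.
        return null;
    }

    private static bool HasPrefix(string path, string prefix) =>
        path.StartsWith(prefix, StringComparison.OrdinalIgnoreCase);
}
```

Inside an Action Filter, the path can be read from `context.Request.RequestUri.AbsolutePath` on the HttpActionExecutedContext instance, and a null result can be treated as "leave the response unchanged".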

Every implementation is different, but remember:

- Understand how your editors will be affected if you set aggressive caching rules on certain pieces of content

- Even anonymous content can be customized via personalization in Episerver, so be mindful before you cache it

- Don’t set public Cache-Control headers on authenticated content that passes through a CDN. This is a recipe for accidental data leaks.

Working with your CDN

There’s little benefit to setting more aggressive Cache-Control headers on your API responses if they aren’t respected by your Content Delivery Network. Cloudflare and Akamai both provide the ability to cache API or Page responses on their edge servers, vastly improving the global scalability of your site by bringing content closer to your users, and by limiting the request volume that goes back to Episerver.

If you are a DXC customer, you’re already using Cloudflare, even though you may not be aware of it. By default, Cloudflare aggressively caches static assets, but it won’t cache pages or API responses unless you set up a “Page Rule”.

If you submit a support ticket, Episerver support can assist with the setup of a Page Rule to ensure that your Cache-Control headers are respected for Content Delivery API responses, and that your responses are cached on Cloudflare’s edge servers when it’s appropriate.

It’s important to limit the scope of your page rules, so restrict each rule to specific paths. The Content Delivery API routes all fall under the following paths:

/api/episerver/v1.0/content/

/api/episerver/v1.0/search/

/api/episerver/v1.0/site/

Cloudflare provides additional options for controlling the cache, including support for the s-maxage directive, which differentiates browser and CDN caching, as well as support for ETag headers, which provide more granular cache-busting via the If-None-Match header.
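To make those two options concrete, here is a minimal sketch using only standard .NET types. It builds a Cache-Control value with separate browser and edge lifetimes (the `s-maxage` directive is exposed in .NET as `SharedMaxAge`), derives an ETag from the response body, and checks it against If-None-Match. The `EdgeCacheHeaders` class and its method names are hypothetical, and the comparison ignores weak validators and the `*` wildcard for brevity.

```csharp
using System;
using System.Linq;
using System.Net.Http;
using System.Net.Http.Headers;
using System.Security.Cryptography;
using System.Text;

public static class EdgeCacheHeaders
{
    // Browsers may reuse the response for browserAge (max-age);
    // shared caches such as a CDN may reuse it for edgeAge (s-maxage).
    public static CacheControlHeaderValue Build(TimeSpan browserAge, TimeSpan edgeAge)
    {
        return new CacheControlHeaderValue
        {
            Public = true,
            MaxAge = browserAge,
            SharedMaxAge = edgeAge
        };
    }

    // ETag derived from a SHA-256 hash of the response body, hex encoded.
    public static EntityTagHeaderValue ComputeETag(string body)
    {
        using (SHA256 sha = SHA256.Create())
        {
            byte[] hash = sha.ComputeHash(Encoding.UTF8.GetBytes(body));
            string hex = BitConverter.ToString(hash).Replace("-", "").ToLowerInvariant();
            return new EntityTagHeaderValue("\"" + hex + "\"");
        }
    }

    // True when the request's If-None-Match header already lists this ETag,
    // in which case the server can answer 304 Not Modified with no body.
    public static bool IsNotModified(HttpRequestMessage request, EntityTagHeaderValue etag)
    {
        return request.Headers.IfNoneMatch.Any(candidate => candidate.Tag == etag.Tag);
    }
}
```

A short max-age combined with a longer s-maxage keeps browsers relatively fresh while still letting the CDN absorb most of the traffic.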

Akamai contains similar features for caching full pages or API responses. At a high level, implementing something similar in Akamai requires setting up rules that respect your Cache-Control headers within Luna Control Center, and ensuring that responses from your origin server have the correct headers attached.

Bringing things to the last mile

When combined with a Content Delivery Network, HTTP Caching can be a powerful tool to make your site more globally scalable. Whether working with the Content Delivery API or your own custom-built APIs in Episerver, consider leveraging a CDN and implementing proper caching headers to improve performance for your users.